AI is changing how information is created, shared, and used. From news articles to social media posts, AI-generated content is becoming a normal part of digital life. This rapid growth raises a significant question for society: should governments regulate AI content, or should people be free to create and use technology without heavy restrictions?

Why People Are Talking About Rules for AI Content

AI systems can produce videos, images, and text at scale. That boosts efficiency and creativity, but it also makes the technology easier to misuse. Fake news, deepfake media, and automated propaganda can spread faster than conventional content. Governments argue that regulating AI content is important for maintaining public trust, curbing the spread of false information, and keeping democracy stable in the digital age.

How Digital Laws Keep AI Under Control

Laws already exist to protect privacy, copyright, and safety online. Some lawmakers think the next step should be to extend these rules to AI. Clear digital laws could establish who is accountable, require AI-generated content to be identifiable as such, and limit how it can be used. Supporters say that such rules wouldn’t stifle creativity; instead, they would encourage responsible innovation.

Worries About Government Control

Critics of AI content regulation say it risks going too far. Too much control could restrict free expression, slow technological progress, and lead to uneven enforcement across regions. Critics also worry that governments might use misinformation control as an excuse to silence dissenting or unpopular voices. These fears point to the need for balanced AI governance rather than strict censorship.

Finding a Balance Between Responsibility and Innovation

A compromise is often suggested. Instead of controlling the content itself, governments could regulate how AI systems are trained, labeled, and used. Ethical standards and transparency requirements may help lower risks while still leaving room for innovation. This approach shifts AI content regulation from restricting content to promoting responsible use.

Questions That People Often Ask

What is AI content regulation?

AI content regulation is a set of rules governing how AI-generated content is created, shared, and made available to the public.

Why do governments want to stop the spread of misinformation?

Governments want to protect elections, public health, and social stability from the harm that false or misleading information can cause.

Does AI governance stop people from speaking their minds?

It depends on how the laws are written. Balanced AI governance doesn’t try to silence people; instead, it focuses on openness and accountability.

Do digital laws cover AI content well enough?

Existing digital laws are a good start, but many experts think they need to be updated to address the new problems that AI raises.

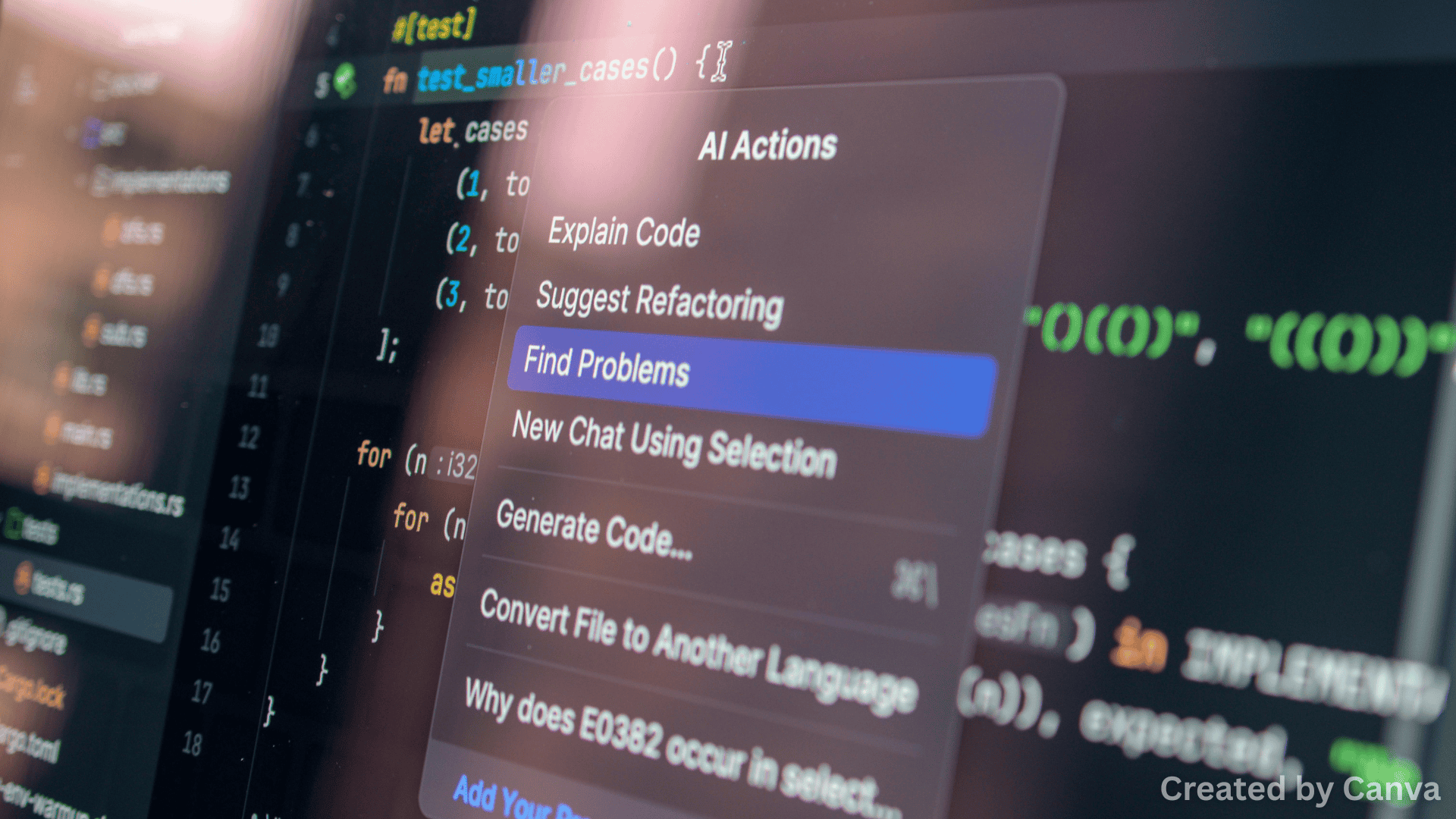

Images are by Canva.com